Key Takeaway

Generation-based AI documentation fails at step 1 of a CRA SR&ED review because it can't produce the contemporaneous evidence CRA asks for. Capture-based documentation is built to supply that evidence from day one.

The first letter from CRA arrives in a tan envelope. The wording is calm. The agency is conducting a review of your SR&ED claim and would like to schedule an initial discussion. The Research and Technology Advisor assigned to the file is named in the letter, along with their contact information. Nothing in the letter is alarming. Nothing in the letter is reassuring either.

What happens next is a defined process, and the process has not changed in substance in years. What has changed is the calibration. CRA reviewers in 2026 read documentation differently than reviewers in 2022 did, and the AI screening tools that now run on incoming T661 narratives flag patterns that would have gone unnoticed three years ago. The companies that lose part of their claim to adjustments under the new calibration are not, in most cases, companies whose underlying R&D was ineligible. They’re companies whose documentation cannot defend the claim it makes. AI-prepped claims, particularly ones produced by generation-based tools, are disproportionately in that category.

This article walks through the review step by step, shows the specific moments where AI-generated narratives fall apart, and explains what to do if you can’t answer the questions a reviewer is going to ask. The deeper architectural treatment of why the failures happen is in our cornerstone article on AI-prepared SR&ED claims.

What Triggers a CRA SR&ED Review?

CRA does not review every claim. The agency uses a combination of risk-based selection and random sampling. The audit rate runs at around 12% of claims in a given year, but the rate is not uniform across claim types. First-time and large-value claims attract more attention; rapid year-over-year growth in claim value attracts attention; high ratios of SR&ED salary to total payroll attract attention; contractor-heavy claims attract attention; and AI/ML claims have been under elevated scrutiny since 2024.

Two newer triggers matter for AI-prepared documentation specifically. The first is CRA’s screening AI, which now runs on incoming T661 narratives and flags patterns associated with low-quality or fabricated documentation: generic AI prose, missing source linkage, technological-uncertainty claims without articulated knowledge gaps. The second is the Pre-Claim Approval program, which went live April 1, 2026 and asks for contemporaneous documentation as a submission requirement. Failing Pre-Claim Approval before filing is a more efficient outcome than failing a review after filing.

We covered what triggers reviews at length in our SR&ED audit article. The summary: AI-prepped claims face two layers of scrutiny now. The screening AI and the human reviewer both calibrate to the same standard.

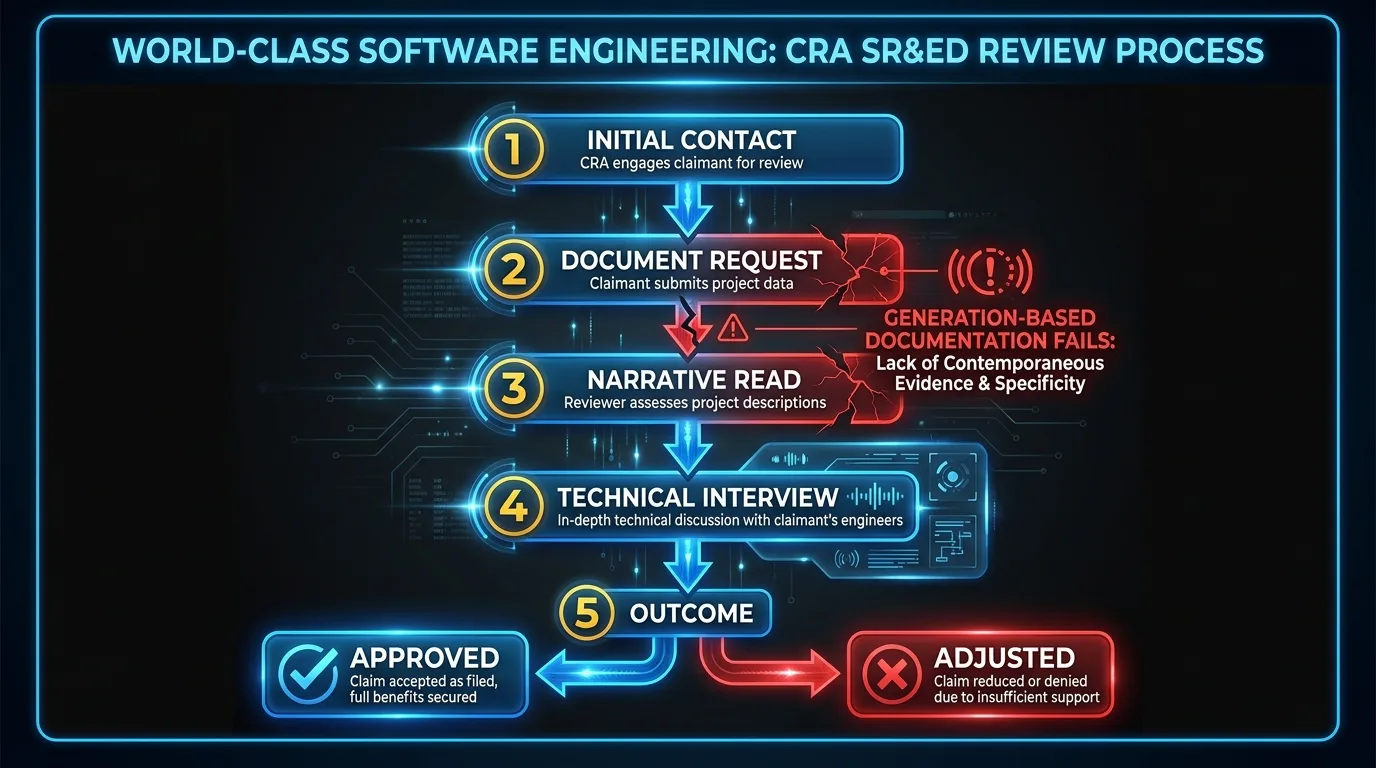

Step 1: Initial Contact and Document Request

The review begins with a letter or phone call from the Research and Technology Advisor (RTA) assigned to your file. The RTA is a technical reviewer, not a tax auditor. Their job is to evaluate whether the work described in your T661 meets the eligibility criteria. The first interaction is procedural: the RTA introduces themselves, explains the scope of the review, and issues a document request.

The document request typically asks for the technical narratives you submitted, project documentation maintained during the claim period, time records supporting your salary allocations, and any contracts with third parties. The phrase that matters is “maintained during the claim period.” The RTA is asking for evidence that pre-dates the filing. They’re not asking for documents you produced after the review notice arrived. They’re asking for the contemporaneous record.

This is the first specific moment where AI-prepped claims start to lose. A generation-based AI tool produces the T661 narrative at filing time, sometimes months after the underlying work. The narrative is the only artifact the company has. The “project documentation maintained during the claim period” is something the engineering team has to find or recreate after the request lands. Some of it exists. Some was never written down. The company starts the review with a documentation gap they didn’t know they had.

A capture-based AI tool produces a different starting position. The contemporaneous documentation is the input to the narrative, not its output. The commits, pull requests, tickets, and design notes are already organized and tied to specific claims. The document request is something the company can answer in days, not weeks.
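A minimal sketch of that difference in starting position, in Python. The `Artifact` record, its field names, and the sample entries are hypothetical illustrations, not the schema of any particular tool or a format CRA defines. The sketch only separates artifacts dated inside the claim period from artifacts dated outside it.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Artifact:
    """A dated piece of project evidence (hypothetical schema)."""
    kind: str       # e.g. "commit", "pull_request", "ticket", "design_note"
    ref: str        # identifier in the source system (illustrative values)
    created: date   # creation date as recorded by the source system


def split_by_period(artifacts, period_start, period_end):
    """Return (contemporaneous, outside_period) lists.

    An artifact counts as contemporaneous here if it was created
    within the claim period, inclusive of both endpoints.
    """
    inside, outside = [], []
    for a in artifacts:
        if period_start <= a.created <= period_end:
            inside.append(a)
        else:
            outside.append(a)
    return inside, outside


# Illustrative data only; none of these references are real.
artifacts = [
    Artifact("commit", "abc1234", date(2025, 3, 4)),
    Artifact("ticket", "ENG-101", date(2025, 6, 18)),
    Artifact("design_note", "sharding-retro.md", date(2026, 5, 2)),
]
contemporaneous, outside_period = split_by_period(
    artifacts, date(2025, 1, 1), date(2025, 12, 31)
)
print([a.ref for a in contemporaneous])
print([a.ref for a in outside_period])
```

In this sketch the design note written after the period ends is exactly the kind of document the request is not asking for.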

Step 2: The Narrative Read

The RTA reads the T661 narratives in the file. This reading can happen before or after the AI screen has done its first pass; either way, the RTA is calibrated against the same expectations as the screen. They’re looking for specificity at every stage of the three-part eligibility test.

For technological uncertainty, the RTA wants to see what was not knowable from existing methods at the start of the work. “We investigated performance optimization approaches” does not articulate a knowledge gap. “We investigated whether a custom hierarchical sharding scheme could maintain p95 query latency below 150ms under concurrent reads exceeding 12,000 RPS, where existing approaches were inadequate for our access pattern” articulates a knowledge gap.

For systematic investigation, the RTA wants to see what was tried, what was observed, what was changed, and what was concluded. “The team conducted iterative experimentation” does not describe an investigation. “The team evaluated four approaches over six weeks. Three failed in specific ways: hot-key promotion degraded long-tail performance, two-tier cache produced inconsistency under concurrent writes, custom sharding alone could not handle hot-key contention. The fourth achieved 142ms p95 at 13,400 RPS in load testing on 2026-02-14” describes an investigation.
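One way to picture the difference is as a structured trial log. The sketch below is an assumption about how such a log might look, not a required format: the `Trial` record and `meets_target` helper are hypothetical, and the only figures used are the ones in the example sentences above. Measurements and dates the example does not give are left empty rather than filled in.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional

TARGET_P95_MS = 150.0   # latency ceiling from the example uncertainty statement
TARGET_RPS = 12_000     # concurrent-read load the target must be exceeded under


@dataclass(frozen=True)
class Trial:
    approach: str
    observation: str
    p95_ms: Optional[float] = None    # None where the example gives no figure
    rps: Optional[int] = None
    tested_on: Optional[date] = None


def meets_target(t: Trial) -> bool:
    """True only when a measured result satisfies both thresholds."""
    if t.p95_ms is None or t.rps is None:
        return False
    return t.p95_ms < TARGET_P95_MS and t.rps > TARGET_RPS


trials = [
    Trial("hot-key promotion", "degraded long-tail performance"),
    Trial("two-tier cache", "inconsistency under concurrent writes"),
    Trial("custom sharding alone", "could not handle hot-key contention"),
    Trial("fourth approach", "met the latency target in load testing",
          p95_ms=142.0, rps=13_400, tested_on=date(2026, 2, 14)),
]

for t in trials:
    status = "met target" if meets_target(t) else "did not meet target"
    print(f"{t.approach}: {status} ({t.observation})")
```

Each row answers the four things the RTA looks for: what was tried, what was observed, what changed between attempts, and what was concluded.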

For technological advancement, the RTA wants to see the knowledge gap that was closed and the prior art the work moved beyond. “We achieved technological advancement in the area of distributed data processing” does not name an advancement. “The hybrid architecture is not described in existing literature for this specific access pattern, and the test results document a measurable performance gain over the published baselines” names an advancement.

Generation-based AI tools default to the first kind of phrasing in each case. The pattern is structural. The model is filling in plausible specifics from training data, and the safest bet for the model is generic phrasing that works across many possible companies. The RTA reads it and reaches for the credibility-failure heuristics.

Step 3: The “Where Did This Come From?” Question

This is the moment most generation-based claims fail. The RTA picks a specific assertion in the narrative and asks the technical team to produce the source artifacts behind it.

For a generation-based claim, the answer is some version of “we generated the narrative from the project description and our knowledge of the work.” The engineering team can usually find some evidence behind the claim, but the evidence has to be excavated from the codebase after the question is asked. The narrative was not built from the artifacts; the artifacts have to be back-fitted to the narrative. Sometimes the back-fitting works. Sometimes the artifact does not exist because the narrative described work that did not happen quite the way the prose implies. Sometimes the artifact exists but does not match the narrative’s specificity. The RTA notices each gap.

For a capture-based claim, the answer is a single click. The narrative carries provenance metadata for every assertion. The “where did this come from” question lands on a commit hash, a pull request number, or a ticket ID with a date. The RTA opens the artifact, reads it, and confirms that the narrative summarizes what’s in the underlying record. The conversation moves on.
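A rough sketch of what per-assertion provenance could look like, in Python. This is an illustration under stated assumptions, not the data model of any specific product: the `Source` and `Assertion` records, the `where_did_this_come_from` helper, and the sample references (`#412`, `PERF-88`) are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Source:
    kind: str     # "commit", "pull_request", or "ticket"
    ref: str      # commit hash, PR number, or ticket ID (illustrative)
    dated: date   # date recorded by the source system


@dataclass(frozen=True)
class Assertion:
    text: str
    sources: tuple   # tuple of Source; empty means no provenance recorded


def where_did_this_come_from(assertion: Assertion) -> list:
    """Answer the reviewer's question as dated references, oldest first."""
    ordered = sorted(assertion.sources, key=lambda s: s.dated)
    return [f"{s.kind} {s.ref} ({s.dated.isoformat()})" for s in ordered]


# Hypothetical references attached to one sentence of a narrative.
claim = Assertion(
    text="The fourth achieved 142ms p95 at 13,400 RPS in load testing.",
    sources=(
        Source("ticket", "PERF-88", date(2026, 2, 14)),
        Source("pull_request", "#412", date(2026, 2, 10)),
    ),
)
print(where_did_this_come_from(claim))
```

An assertion with an empty `sources` tuple returns an empty list, which is the generation-based case: there is nothing for the RTA to open.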

The Tax Court’s ruling in DAZZM Inc. v. The King, 2024 TCC 129 turned on this exact issue: the documentation submitted at the review stage had been substantially reconstructed after the fact, and the court treated the reconstruction as a credibility failure. The case is not about AI. It’s about contemporaneous documentation as a procedural standard. The standard CRA reviewers apply in the technical interview is the same standard the Tax Court applies on appeal.

Step 4: The Technical Interview

In many reviews, CRA requests an interview with the technical team members who performed the SR&ED work. Not the accountant. Not the consultant who prepared the filing. The engineers. The RTA wants to talk to the people who shipped the work, ask them what they were trying to accomplish, what they didn’t know how to do at the start, what approaches they tried, and what they learned.

Engineers who can speak fluently to these questions are convincing. Engineers who can’t recall the work because it was filed by an external consultant who reconstructed it from Jira tickets are not. Generation-based AI tools introduce a third failure mode: engineers who recognize the project but cannot recognize the narrative the AI tool produced. The narrative described the work in terms the engineering team would not have used. The technical specifics in the narrative are not the specifics the engineers remember tackling. The narrative is plausible but not theirs.

Capture-based tools avoid that failure mode because the narrative is summarized from the engineers’ own commits, pull requests, and tickets. The prose is not their writing, but the substance is their work. They recognize it. The interview becomes a conversation about decisions they actually made, with the underlying artifacts on the table. That’s the kind of interview the RTA is calibrated to find convincing.

Step 5: The Outcome

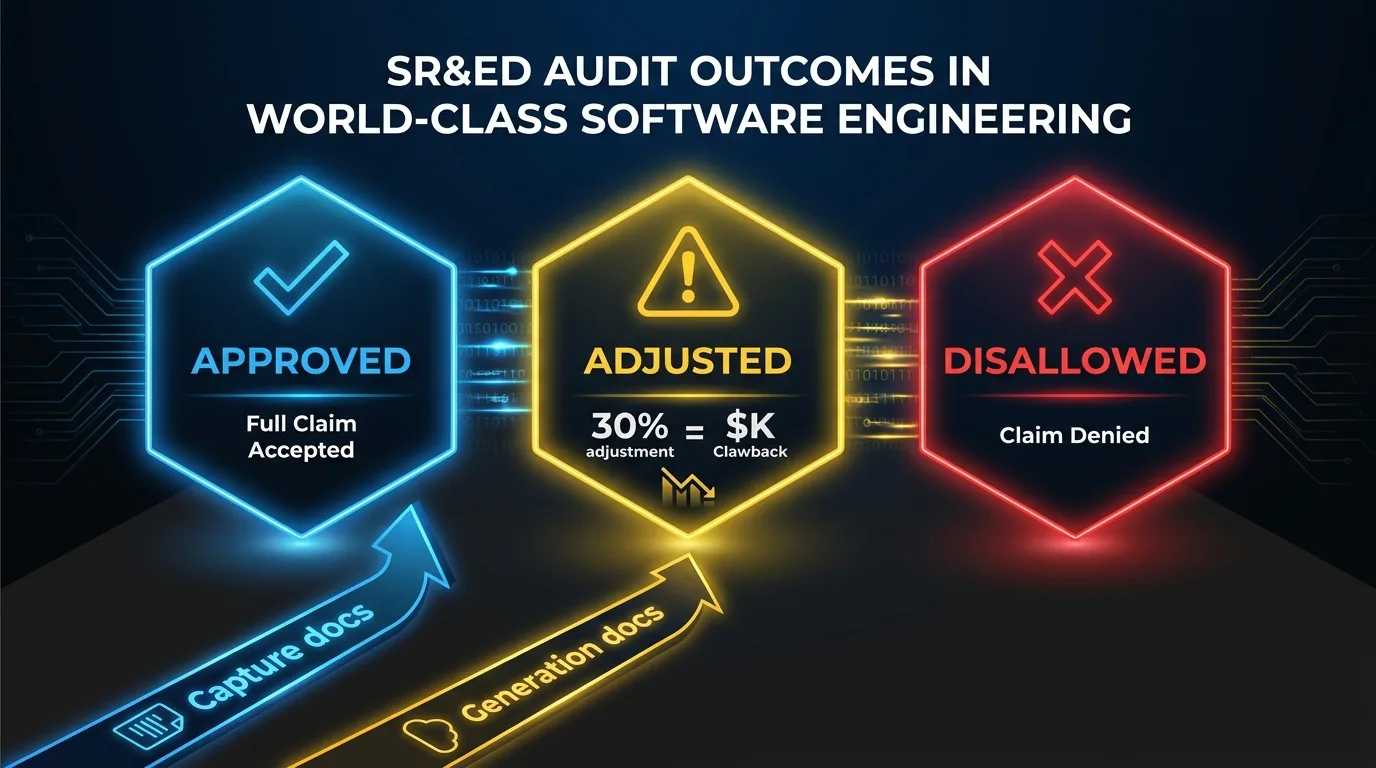

The review results in one of three outcomes: claim approved as filed, claim adjusted (partially disallowed), or claim disallowed entirely. Adjustments are the most common outcome for software companies. CRA typically disallows specific project lines or reduces salary allocations rather than rejecting the whole claim.

Generation-based AI documentation tends to produce adjustments more often, not because the underlying work was ineligible, but because the documentation cannot defend the claim it makes when probed. Project lines that read generically get reduced or removed. Salary allocations attached to project lines that don’t survive the technical review get adjusted accordingly. A 30% adjustment on a $400,000 claim is a $120,000 clawback before professional fees and interest.
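The clawback figure is simple arithmetic, shown here as a short Python sketch. The `clawback` function name is illustrative, and the calculation deliberately leaves out professional fees and interest because the example gives no figures for them.

```python
from decimal import Decimal


def clawback(claim_value: Decimal, disallowed_fraction: Decimal) -> Decimal:
    """Amount clawed back when a fraction of the claim is disallowed."""
    return claim_value * disallowed_fraction


# Figures from the example: a 30% adjustment on a $400,000 claim.
amount = clawback(Decimal("400000"), Decimal("0.30"))
print(f"${amount:,.0f}")
```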

Capture-based documentation tends to produce approvals more often, again not because the underlying work is more eligible, but because the documentation answers the questions the review process asks. The narrative is grounded in source artifacts. The artifacts are dated. The technical interview confirms the substance. The RTA finds what they’re calibrated to find: a contemporaneous record of systematic investigation into a technological uncertainty that produced a technological advancement.

You retain the right to object to any outcome. The objection process is formal and involves the appeals division, not the SR&ED audit team. If you have documentation supporting your position, the objection is worth pursuing. If the documentation is thin, the objection is usually a harder fight.

What Do You Do If You Can’t Answer These Questions?

If you read the previous five sections and realized your current SR&ED prep cannot answer the “where did this come from” question, the action is straightforward. Don’t file a 2026 claim with documentation produced primarily from a prompt. Either rebuild the narrative from your actual source data, paragraph by paragraph, with every claim tied to a dated artifact, or run your prep through a capture-based architecture that does the work continuously instead of at filing time.
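If you take the rebuild route, a simple coverage check can show how far the narrative is from that standard. The sketch below is a hypothetical self-audit, not a CRA procedure: the dictionary layout, the `unsupported_paragraphs` function, and the sample references are assumptions made for illustration.

```python
from datetime import date


def unsupported_paragraphs(narrative):
    """Return indexes of paragraphs with no dated artifact attached.

    `narrative` is a list of dicts with keys "text" and "artifacts",
    where each artifact is a (reference, date-or-None) pair.
    """
    missing = []
    for i, para in enumerate(narrative):
        has_dated = any(d is not None for _ref, d in para["artifacts"])
        if not has_dated:
            missing.append(i)
    return missing


# Illustrative narrative; the artifact references are made up.
narrative = [
    {"text": "The fourth achieved 142ms p95 at 13,400 RPS in load testing.",
     "artifacts": [("ticket PERF-88", date(2026, 2, 14))]},
    {"text": "The team conducted iterative experimentation.",
     "artifacts": []},
    {"text": "Two-tier cache produced inconsistency under concurrent writes.",
     "artifacts": [("undated whiteboard photo", None)]},
]
print(unsupported_paragraphs(narrative))
```

Paragraphs the check flags are the ones to rebuild from source data or cut before filing.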

The first question to ask your tool vendor is the same as the RTA’s: where does every technical claim in our T661 narratives come from? The right answer is a source artifact. The wrong answer is a description of the prompt.

If you’re early in your 2026 cycle and want to see what the alternative looks like, our demo is twenty-five minutes. We’ll connect Lucius to a sample repository and trace a generated paragraph back to the commit it came from. If your repository has the contemporaneous artifacts in it, capture is the architecture that turns those artifacts into a defensible claim.

FAQ

What percentage of SR&ED claims get reviewed by CRA?

About 12% of claims in a given year go through a technical review, but the rate isn’t uniform. First-time filers, large-value claims, rapidly growing claims, and AI/ML claims face higher review rates. The AI screening that now runs on incoming T661 narratives adds another selection layer for claims with generic documentation patterns.

What happens if CRA adjusts my SR&ED claim?

CRA issues a Notice of Reassessment adjusting the credit amount. You have 90 days to object formally. If your documentation is thin, the objection is a harder fight. If it’s strong, the objection has a real chance. Either way, professional fees for the objection process add to the cost of the adjustment. On a $400,000 claim with a 30% disallowance, the clawback alone is $120,000 before those fees.

Can I fix a generation-based SR&ED claim after getting a review notice?

You can supplement the documentation, but you can’t retroactively make it contemporaneous. The problem isn’t the prose quality; it’s that the narrative wasn’t built from dated source artifacts. Some companies successfully object with supplementary technical evidence. Many don’t. Prevention is much cheaper than remediation.

If you can’t answer these questions about your own claim, talk to our team. We help software companies assess whether their SR&ED documentation is contemporaneous, capture-based, and ready for a CRA review.