Key Takeaway

Open-source work qualifies for SR&ED when your team pushes beyond documented capabilities and the solution wasn’t knowable from existing public knowledge.

Software companies building on open source tend to assume none of that work qualifies for SR&ED. The logic sounds reasonable: if the code is public, there’s no technological uncertainty. The answer is already out there.

That reasoning is wrong more often than you’d think. Open-source software is a starting point, not a finished solution. When your engineering team forks a project for a problem the maintainers never anticipated, or integrates multiple OSS components and hits interaction effects that require systematic investigation, the work can meet every part of CRA’s eligibility criteria. The question isn’t whether the base code is public. It’s whether the problem your team solved was answerable from existing, publicly available knowledge.

Many SR&ED advisors overlook this entirely. As a result, developer-led companies leave qualifying work off their claims because the codebase happened to start as an open-source project.

How Does CRA’s “Publicly Available Knowledge” Test Apply?

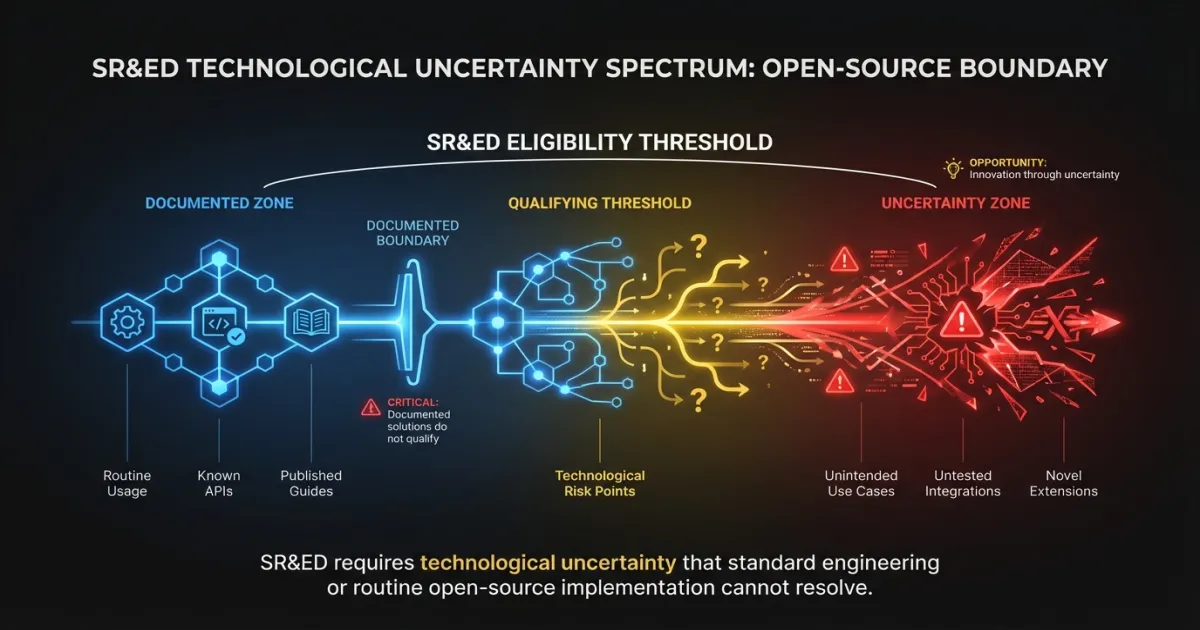

CRA’s eligibility framework hinges on technological uncertainty: could a qualified practitioner have determined the solution using generally available scientific or technological knowledge?

For open-source work, this becomes a specific question: is the solution documented in the project’s existing codebase, docs, issues, or community resources?

If your team followed the project’s docs, used its APIs as intended, and configured it per published guides, that’s routine application of existing knowledge. No uncertainty, no claim.

But open-source documentation has limits. Most projects document their intended use cases. They rarely document what happens when you push the software into an unplanned domain, combine it with other tools in untested configurations, or need it to perform under constraints the original authors didn’t consider. When your team crosses that boundary, they enter genuine technological uncertainty.

The test is objective. It doesn’t matter that your team found the answer and contributed it back. What matters is that the answer was not knowable beforehand.

What Are Five Scenarios Where Open-Source Work Qualifies?

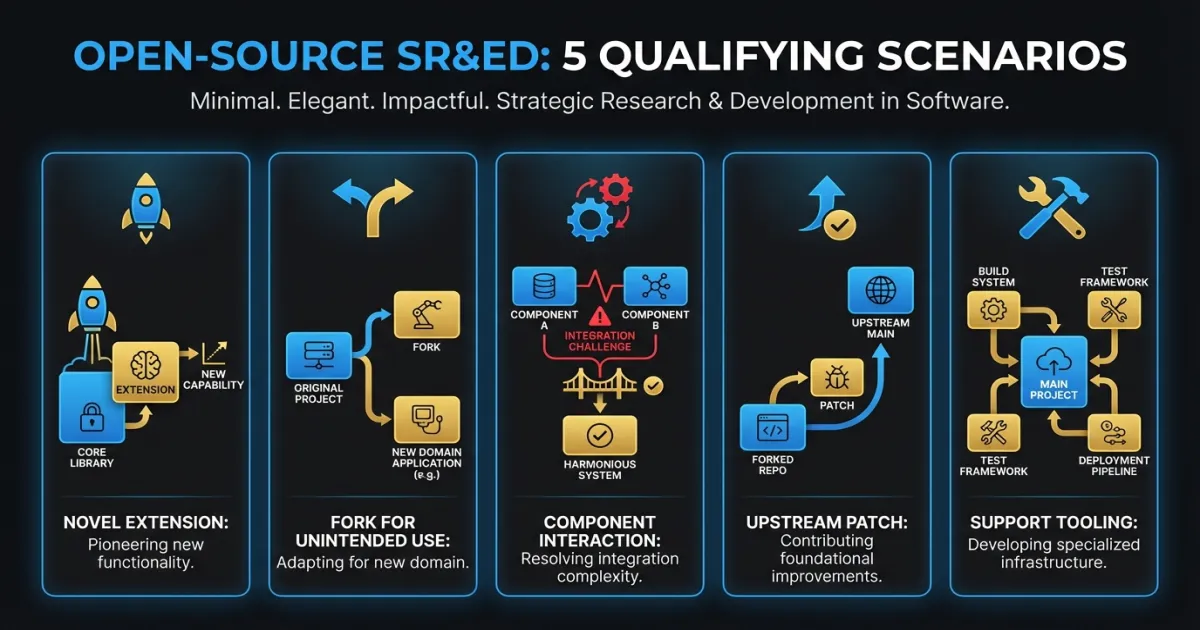

The same three-part test applies to open-source work as to any other SR&ED claim: technological uncertainty, systematic investigation, and technological advancement. Here’s how it plays out.

1. Building a novel extension where no existing solution fits

Your team needs functionality that doesn’t exist in the ecosystem. You’ve searched the plugin registry, the issue tracker, and the community forums. Nothing fits.

Building a standard plugin following the project’s extension API is routine. But when the extension framework itself can’t support what you need, and your team has to investigate whether the underlying architecture can be adapted in ways the project wasn’t designed for, uncertainty enters.

Example: A team building on Apache Kafka needed exactly-once processing guarantees across a multi-region topology with sub-100ms latency. Kafka’s existing exactly-once semantics didn’t cover their cross-region replication scenario. The team spent 10 weeks investigating whether the consumer group protocol could be extended to support their consistency requirements without violating ordering guarantees other consumers depended on. They tested four approaches. Two introduced subtle ordering violations that only appeared under specific partition rebalancing conditions.

That investigation qualifies. The answer wasn’t in the docs. The problem required systematic experimentation. The resulting knowledge advanced understanding of how Kafka’s protocol behaves under constraints the original design didn’t account for.

2. Forking and modifying OSS for an unintended problem

Forking an open-source project and adapting it for a problem the original codebase wasn’t designed for is one of the most common qualifying scenarios. It’s also one of the most overlooked.

The key is the gap between what the software was built to do and what your team needs it to do. If that gap requires investigation, if your engineers can’t predict whether the modifications will work based on existing documentation, the fork becomes an experimental development project.

Example: A computer vision startup forked an inference engine optimized for cloud GPUs and tried to run it on edge devices with constrained memory. The original codebase assumed abundant VRAM and used allocation patterns that caused out-of-memory crashes on target hardware. The team spent 14 weeks rewriting the memory management layer, testing different quantization approaches, and investigating whether accuracy degraded below acceptable thresholds at each compression level. Three of five quantization strategies produced accuracy degradation visible only on specific input distributions.

The fork itself isn’t what qualifies. The systematic investigation required to determine whether and how the software could be adapted for an untested environment is what qualifies.

3. Integrating multiple OSS components with unpredictable interactions

This scenario maps to a principle recognized in Canadian tax case law. A 2024 Tax Court decision confirmed that combining known components can create technological uncertainty when their interactions produce unpredictable behavior requiring original investigation.

Open-source ecosystems are full of this. Each library works as documented in isolation. Put three or four together and edge cases emerge that nobody anticipated, because nobody tested that specific combination.

Example: A fintech company integrated a message broker, a time-series database, and a stream processing framework for a real-time risk pipeline. Each component worked individually. Under production load, the system showed data inconsistencies that none of the individual projects’ docs addressed. The interaction between the broker’s delivery guarantees, the database’s write buffering, and the stream processor’s checkpointing created a window where risk calculations used stale data. The team spent 8 weeks investigating the interaction, building custom instrumentation to observe timing gaps, and developing a coordination mechanism absent from all three projects.

The individual technologies were known. The interaction was not. That’s the uncertainty CRA looks for.

4. Contributing upstream patches that solve technological problems

When your team solves a genuine technological problem and contributes the solution back, the qualifying work happened before the patch was merged. The investigation, the failed approaches, the systematic testing all occurred while the answer was still unknown.

The fact that the solution becomes public knowledge doesn’t retroactively disqualify the work. CRA evaluates eligibility at the time the work was performed, not when the claim is filed.

Example: A team working with a distributed database discovered its consensus algorithm exhibited split-brain behavior under a specific network partition pattern the test suite didn’t cover. They spent 6 weeks investigating the root cause, testing three fixes, and validating each didn’t introduce regressions in other failure scenarios. They contributed the final patch upstream. The PR was accepted.

The upstream contribution doesn’t affect eligibility in either direction. It doesn’t disqualify the work (the uncertainty existed at the start), and it doesn’t automatically qualify it (the three-part test still applies). What matters is whether the investigation met CRA’s criteria during the period the work was performed. For more on how SR&ED applies across different software scenarios, see our examples guide.

5. Building custom tooling to enable other qualifying R&D

Sometimes the open-source work isn’t the primary R&D project. Your team builds custom tooling around OSS components to enable other qualifying research.

CRA allows “support work” to qualify when it’s directly related to and necessary for eligible SR&ED projects. Custom test harnesses, specialized monitoring tools, or data pipeline infrastructure built specifically to support an R&D investigation can count.

Example: A machine learning team building a novel recommendation system needed to evaluate model performance against user behavior data at a scale their existing infrastructure couldn’t handle. They built a custom evaluation framework on top of Apache Spark, writing specialized operators absent from the Spark ecosystem to simulate user session behavior with realistic timing distributions. The evaluation framework wasn’t the R&D project. The recommendation algorithm was. But the framework was necessary to run the primary investigation’s experiments.

Document this tooling as support work for the parent SR&ED project, not as a standalone claim.

What Open-Source Work Doesn’t Qualify?

Not all open-source work involves technological uncertainty. These activities are routine application of existing knowledge, regardless of engineering effort:

Routine library usage. Installing a package, calling its API per the docs, configuring it per the README. Time-consuming doesn’t mean uncertain.

Standard configuration and deployment. Setting up Kubernetes clusters, configuring CI/CD pipelines, deploying monitoring stacks. These follow documented patterns.

Bug fixes for known issues. If the bug is documented in the issue tracker with a known fix, applying it isn’t SR&ED. If the bug is unknown and requires original investigation to diagnose, that’s different.

Integration following documented patterns. Connecting two services using their published integration guides, even if it takes weeks. Effort isn’t the same as uncertainty.

Performance optimization using known techniques. Profiling and applying established patterns (caching, connection pooling, query optimization) is standard practice. For a deeper look at where the line falls, see our SR&ED examples guide.

How Should You Document Open-Source SR&ED Projects?

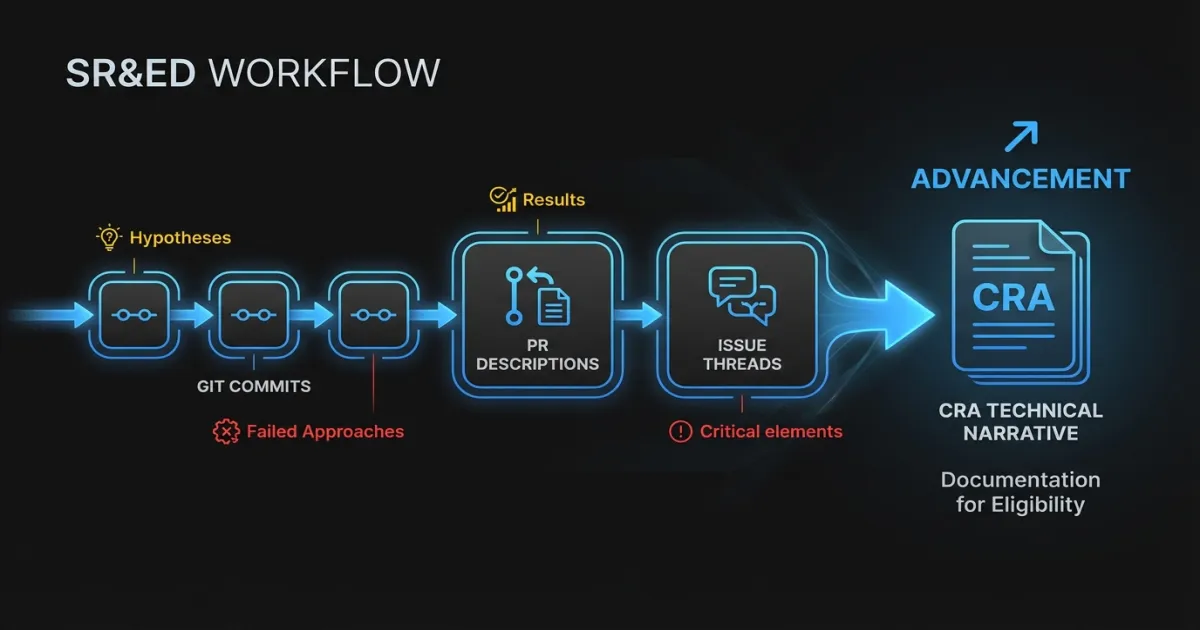

Open-source SR&ED projects have a documentation advantage most teams don’t realize: the evidence trail already exists.

Git history is your lab notebook. Commit messages, branch names, and PR descriptions document the progression of investigation. A branch named experiment/custom-consensus-fix-v3 with 47 commits over 6 weeks tells a story of systematic investigation that CRA reviewers can follow.
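As a rough illustration of how that history can be condensed for a claim file, the sketch below turns `git log` output into a commit count and an elapsed-time span for one branch. The names `read_log`, `summarize_log`, and `BranchSummary` are assumptions made for this example, and nothing here is a format CRA prescribes. The Git placeholders used (`%H`, `%aI`, `%s`, `%x1f`) are standard `git log` pretty-format fields.

```python
import subprocess
from dataclasses import dataclass
from datetime import datetime

FIELD_SEP = "\x1f"  # unit separator; emitted by git for the %x1f placeholder


@dataclass
class BranchSummary:
    commits: int
    first: datetime
    last: datetime

    @property
    def weeks(self) -> float:
        """Elapsed time between the first and last commit, in weeks."""
        return (self.last - self.first).days / 7


def read_log(branch: str, repo: str = ".") -> str:
    """Return one line per commit: hash, ISO 8601 author date, subject."""
    result = subprocess.run(
        ["git", "-C", repo, "log", "--pretty=format:%H%x1f%aI%x1f%s", branch],
        capture_output=True, text=True, check=True,
    )
    return result.stdout


def summarize_log(log_text: str) -> BranchSummary:
    """Count commits and find the earliest and latest author dates."""
    dates = []
    for line in log_text.split("\n"):
        if not line:
            continue
        _hash, iso_date, _subject = line.split(FIELD_SEP, 2)
        dates.append(datetime.fromisoformat(iso_date))
    if not dates:
        raise ValueError("no commits found in log text")
    return BranchSummary(commits=len(dates), first=min(dates), last=max(dates))
```

Inside a repository, `summarize_log(read_log("experiment/custom-consensus-fix-v3"))` would produce the kind of "N commits over M weeks" figure described above. The summary is a pointer into the evidence, not a substitute for the technical narrative.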

PR descriptions capture hypotheses and results. When engineers write “Tried approach X, but it caused Y under condition Z. This PR implements approach W instead,” they’re documenting exactly the hypothesis-test-result loop CRA looks for.

Issue discussions document uncertainty. Threads where engineers debate possible approaches, link to research papers, or explain why the obvious solution won’t work are direct evidence the answer wasn’t knowable from existing knowledge.

The challenge is making this evidence accessible to a CRA reviewer who won’t read your Git log. Your technical narrative needs to translate raw development history into CRA’s language: what was the uncertainty, what hypotheses did you test, what did you learn, and how does the result advance the state of technology.

Practical steps to strengthen your documentation:

- Write commit messages that explain why, not just what. “Reverted approach A due to 3x latency regression under concurrent load” beats “Reverted changes.”

- Use PR descriptions as mini experiment reports. State the hypothesis, the approach, the result, and the next step.

- Tag qualifying work in your project management tool. A simple label like sred-candidate on tickets involving genuine investigation makes claim preparation far easier.

- Preserve dead-end branches. Failed experiments are evidence of systematic investigation. Don’t delete them.
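The "mini experiment report" habit from the list above can be made concrete with a small template. This is a minimal sketch: the `ExperimentReport` class, its field names, and the heading layout are hypothetical choices for illustration, not a structure CRA requires.

```python
from dataclasses import dataclass, fields
from typing import List


@dataclass
class ExperimentReport:
    hypothesis: str
    approach: str
    result: str
    next_step: str

    def missing(self) -> List[str]:
        """Names of fields left blank, so incomplete reports are easy to spot."""
        return [f.name for f in fields(self) if not getattr(self, f.name).strip()]

    def to_markdown(self) -> str:
        """Render the report as the body of a PR description."""
        sections = [
            ("Hypothesis", self.hypothesis),
            ("Approach", self.approach),
            ("Result", self.result),
            ("Next step", self.next_step),
        ]
        return "\n\n".join(f"## {title}\n{body.strip()}" for title, body in sections)
```

A team could call `missing()` in a pre-merge check on tickets labelled sred-candidate, so that a PR recording a failed approach still states what was tried and what happens next.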

Does Contributing Code Back Affect Your SR&ED Claim?

One concern stops engineering leaders from considering open-source work for SR&ED more than any other: “We don’t own the IP. We contributed the code back. How can it qualify?”

CRA does not require you to own the intellectual property resulting from your investigation. The program evaluates the work performed, not the ownership of the output. If your team conducted eligible experimental development, it qualifies regardless of whether you keep the result proprietary, contribute it upstream, or publish it in a blog post.

This makes sense. The program exists to encourage Canadian companies to take on technological risk. Contributing results to the community doesn’t reduce the risk your team took. It doesn’t undo the investigation, the failed approaches, or the resources invested. SR&ED compensates the R&D activity, not the resulting IP.

Companies that contribute heavily to open source should audit their engineering work for this pattern. If your team is solving problems the community hasn’t solved, documenting their investigation, and advancing the state of technology, that work belongs in your SR&ED claim.

How Do You Get Started?

If your company builds on or contributes to open source, start with a focused review of the past 12 months. Look for projects where your engineering team:

- Spent more than two weeks investigating a problem not addressed in existing documentation

- Forked or significantly modified an OSS project for an unintended use case

- Integrated multiple open-source components and encountered interaction effects requiring original investigation

- Built custom tooling to enable experiments or research

- Contributed patches that solved problems the community hadn’t addressed

For each candidate project, apply the three-part test. Was there genuine technological uncertainty? Did the team investigate systematically? Did the work produce a technological advancement?
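For a first pass over many projects, the three questions can be recorded as a simple checklist. The sketch below is a triage aid only, under the assumption that each answer has already been judged by someone who knows the work. The `Candidate` class and `shortlist` function are hypothetical names, and passing this screen is not an eligibility determination.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass
class Candidate:
    name: str
    uncertainty: bool   # answer was not knowable from public knowledge at the start
    systematic: bool    # hypotheses were formed, tested, and recorded
    advancement: bool   # the work produced new technological knowledge


def passes_screen(c: Candidate) -> bool:
    """True only when all three parts of the test are answered yes."""
    return c.uncertainty and c.systematic and c.advancement


def shortlist(candidates: Iterable[Candidate]) -> List[str]:
    """Names of projects worth a closer look during claim preparation."""
    return [c.name for c in candidates if passes_screen(c)]
```

Projects that fail on a single question are still worth a note: a missing "systematic" answer often means the investigation happened but was never written down.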

Most companies that do this review find qualifying work they’ve been ignoring. The open-source label makes it feel like public knowledge. Often, the work your team did to push that software beyond its documented capabilities is exactly what the program was designed to reward.

FAQ

Can I claim SR&ED on open-source work if I contributed the code back?

Yes. CRA evaluates the R&D activity, not IP ownership. If your team conducted eligible experimental development, the work qualifies whether you keep it proprietary or contribute it upstream. The uncertainty existed when you started. The public contribution doesn’t change that.

Does forking an open-source project automatically qualify for SR&ED?

No. A fork only qualifies if the modifications required genuine systematic investigation into a technological uncertainty. Simply adapting a project following its documented extension patterns is routine engineering. The fork qualifies when your team can’t predict whether modifications will work based on existing knowledge and must experiment to find out.

How do I prove technological uncertainty when the base code is public?

Focus your technical narrative on the gap between what the project documents and what your team needed it to do. Show that you searched existing resources (docs, issues, community forums) and the answer wasn’t there. Then document the systematic investigation: hypotheses tested, approaches that failed, and what you learned. Git history, PR discussions, and experiment branches provide strong supporting evidence for an SR&ED audit.

Not sure which open-source projects in your engineering org qualify? Talk to our SR&ED team. We help software companies identify qualifying R&D, structure documentation that survives CRA review, and maximize claims without overreaching.